LAS VEGAS, April 23, 2026 —

Key Takeaways

- Google has committed $175–$185 billion in infrastructure spending for 2026 — nearly double the $91.4 billion it spent in 2025 — as it bets the company’s future on custom AI silicon.

- The new TPU 8i chip delivers 80% better performance per dollar for AI inference than the prior generation, while the TPU 8t achieves 3x the processing power of Ironwood and scales to 9,600 chips and 121 exaFLOPS in a single superpod.

- Google CEO Sundar Pichai revealed that 75% of all new code at Google is now AI-generated and reviewed by engineers — a striking internal signal of how far the company has taken its own technology.

What Google Announced at Cloud Next 2026

Google’s annual cloud conference, held this week in Las Vegas, was not a product reveal. It was a declaration of intent. Two days of announcements, stacked from chip architecture to enterprise software, added up to a single message: Google is no longer just competing in AI. It is trying to own the entire stack.

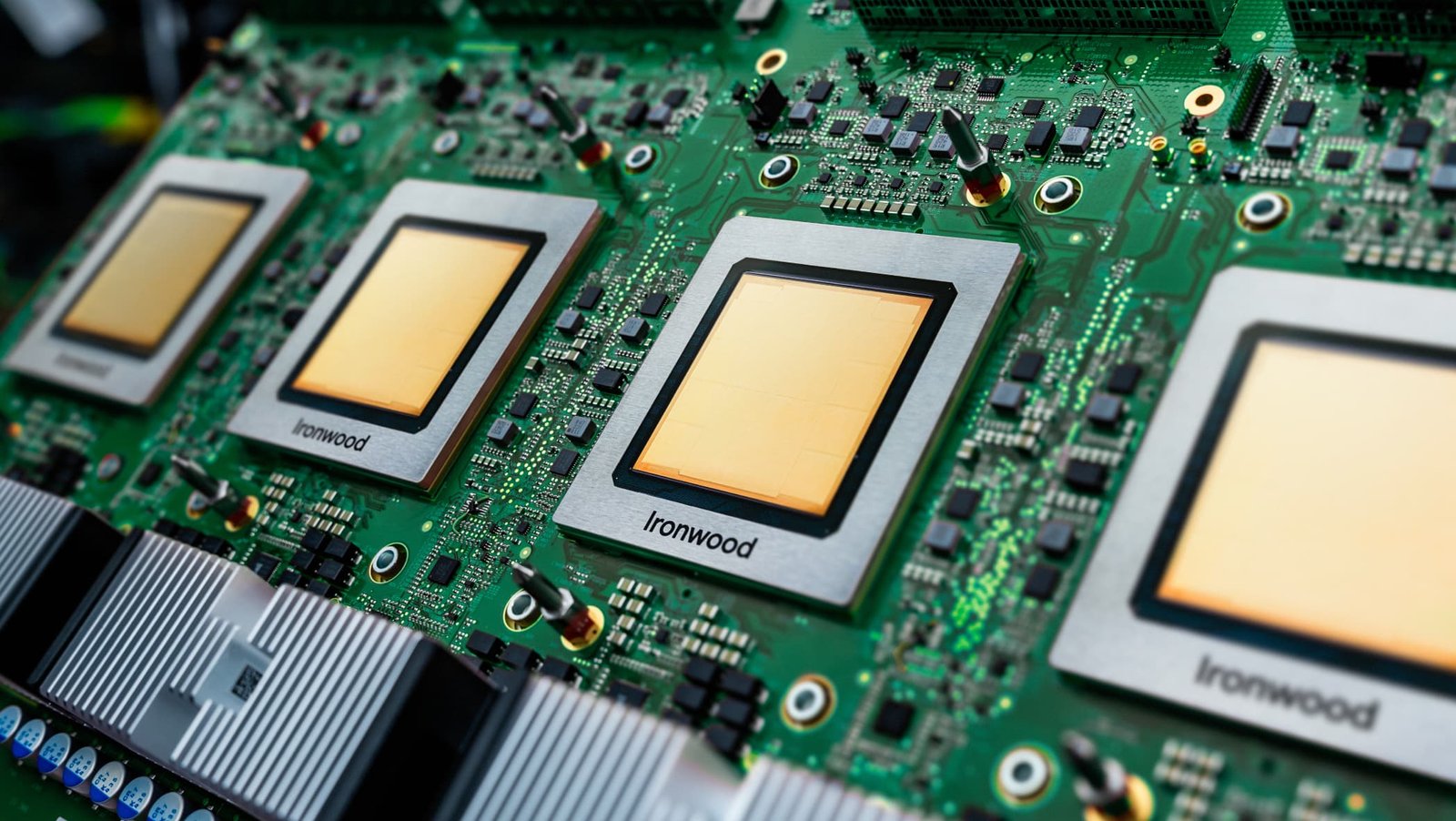

At the center of the announcement was the general availability of Ironwood, Google’s seventh-generation Tensor Processing Unit, which the company describes as “the first Google TPU for the age of inference.” Alongside it came a preview of the eighth-generation architecture, which for the first time splits into two purpose-built chips: TPU 8t for training and TPU 8i for inference. Both are built on TSMC 2-nanometer process technology and targeted for late 2027.

Why Two Chips Instead of One?

The split matters because training and inference are fundamentally different computational workloads. Training a model requires brute scale — moving enormous datasets through billions of parameters. Inference is what happens when users actually use AI: rapid, low-latency responses delivered at massive concurrency. Until now, most chips tried to do both adequately. Google is betting on doing each excellently.

TPU 8t is designed to scale to 9,600 chips in a single superpod, providing 121 exaFLOPS of compute and two petabytes of shared memory, with Google projecting the ability to turn months of AI model training into weeks using clusters of over one million TPUs.
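The superpod totals imply rough per-chip figures. The sketch below is back-of-envelope arithmetic on the numbers quoted above, not a published specification: it assumes the totals divide evenly across all 9,600 chips and that the units are decimal (1 exaFLOPS = 1,000 petaFLOPS, 1 petabyte = 1,000,000 gigabytes). The function name is illustrative.

```python
# Figures quoted in the article for a single TPU 8t superpod.
CHIPS_PER_SUPERPOD = 9_600
SUPERPOD_EXAFLOPS = 121
SUPERPOD_SHARED_MEMORY_PB = 2


def per_chip_figures(chips, exaflops, memory_pb):
    """Return (petaFLOPS per chip, GB of shared memory per chip).

    Assumes an even split across chips and decimal units; both are
    assumptions for illustration, not published per-chip specs.
    """
    petaflops_per_chip = exaflops * 1_000 / chips
    gigabytes_per_chip = memory_pb * 1_000_000 / chips
    return petaflops_per_chip, gigabytes_per_chip


pflops, gb = per_chip_figures(
    CHIPS_PER_SUPERPOD, SUPERPOD_EXAFLOPS, SUPERPOD_SHARED_MEMORY_PB
)
print(f"{pflops:.1f} petaFLOPS per chip, {gb:.0f} GB per chip")
```

Under those assumptions, each chip contributes about 12.6 petaFLOPS and about 208 gigabytes of the shared memory pool. The article does not say which numeric precision the 121 exaFLOPS figure refers to, so the per-chip number is only comparable to other chips quoted at the same precision.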

TPU 8i triples on-chip SRAM to 384 megabytes, increases high-bandwidth memory to 288 gigabytes, and introduces a dedicated Collectives Acceleration Engine that reduces on-chip latency by up to 5x — specifically engineered for the kind of concurrent agent workloads that are now driving enterprise AI adoption.

The Gemini Platform Rebranding — and What It Means for Businesses

| Old Product | New Name | Function |
|---|---|---|
| Vertex AI | Gemini Enterprise Agent Platform | AI agent build/deploy/govern hub |
| Agentspace | Absorbed into Gemini Enterprise | Internal agent discovery and registry |
| Individual agent tools | Agent Gateway | Security, monitoring, governance layer |
| Workspace AI features | Workspace Studio | No-code agent builder for business users |

Google Cloud CEO Thomas Kurian framed the platform strategy explicitly: the company wants enterprises to have “chips that are designed for models, models grounded in your data, agents and applications built with those models” — all secured by the same infrastructure. No other competitor, Kurian argued, controls the full vertical from chip to application.

Google Cloud currently holds roughly 11% of the cloud infrastructure market, compared to AWS at 31% and Azure at 25%. The announcements this week will not close that gap immediately. But in the emerging market for enterprise AI agents — where inference cost, latency, and governance matter more than raw model benchmarks — Google’s integrated approach is designed to reshape the competitive dynamics.

The Anthropic Connection — and What It Says About the AI Chip War

Anthropic, Google’s most important cloud client, has committed to up to one million Ironwood TPU units in 2026, with the deal already expanding to 3.5 gigawatts of compute coming online in 2027 — positioning it as the anchor customer for both the seventh and eighth generations of Google’s custom silicon.

The arrangement illuminates a broader shift: the frontier AI companies that once treated cloud providers as commodity infrastructure are now becoming their most strategically significant customers. Google projects 4.3 million TPU shipments in 2026, rising to 10 million in 2027 and more than 35 million in 2028. Combined with Microsoft, Meta, and Amazon, total big tech AI infrastructure spending is approaching $700 billion this year — a figure with no precedent in the history of computing investment.
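To put those projections in proportion, the short sketch below works out the year-over-year shipment multiples and Google’s approximate share of the combined spending. It uses only figures quoted in this article. Two caveats: the 2028 shipment number is reported as “more than 35 million,” so it is treated as a floor, and the $700 billion total is an approximation. The variable and function names are illustrative.

```python
# Projected TPU shipments quoted in the article, in millions of units.
# The 2028 figure is reported as "more than 35 million", so it is a floor.
PROJECTED_SHIPMENTS_MILLIONS = {2026: 4.3, 2027: 10.0, 2028: 35.0}

# Google's 2026 infrastructure spending range and the approximate
# combined big tech total, both in billions of dollars.
GOOGLE_CAPEX_RANGE_BILLIONS = (175, 185)
COMBINED_CAPEX_BILLIONS = 700  # "approaching $700 billion", so approximate


def year_over_year_multiples(series):
    """Map each year after the first to its multiple of the prior year."""
    years = sorted(series)
    return {
        later: series[later] / series[earlier]
        for earlier, later in zip(years, years[1:])
    }


multiples = year_over_year_multiples(PROJECTED_SHIPMENTS_MILLIONS)
google_share = tuple(
    capex / COMBINED_CAPEX_BILLIONS for capex in GOOGLE_CAPEX_RANGE_BILLIONS
)

for year, multiple in multiples.items():
    print(f"{year}: {multiple:.1f}x the prior year's shipments")
print(
    f"Google share of combined spending: "
    f"{google_share[0]:.0%} to {google_share[1]:.0%}"
)
```

On these numbers, projected shipments grow about 2.3x in 2027 and at least 3.5x in 2028, and Google accounts for roughly a quarter of the combined spending.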

For anyone who has wondered whether the AI boom is a cycle or a structural shift, Google’s commitment of up to $185 billion in infrastructure spending is the clearest answer the industry has offered yet. Jensen Huang’s Nvidia still dominates today’s GPU market. But Google is building the infrastructure for the market it believes comes next, and it is spending like it intends to win.