ZURICH / WASHINGTON, April 26, 2026 —

Key Takeaways

- Sony AI’s robot Ace, described in a paper published in the journal Nature this week, became the first autonomous system to defeat elite human athletes at a physical sport under official competition rules. It won 3 of 5 matches against elite players who average 20 hours of training per week and have played for over a decade.

- Ace tracks the ball’s position in 3D space 200 times per second and measures spin using several hundred sensor readings per second — reacting in roughly one-tenth the time a human player would need to respond to an incoming shot.

- After further improvements, Ace competed again in March 2026 — winning at least one match against each of three professional players, a result that would have been considered impossible by most roboticists just five years ago.

What Ace Actually Is

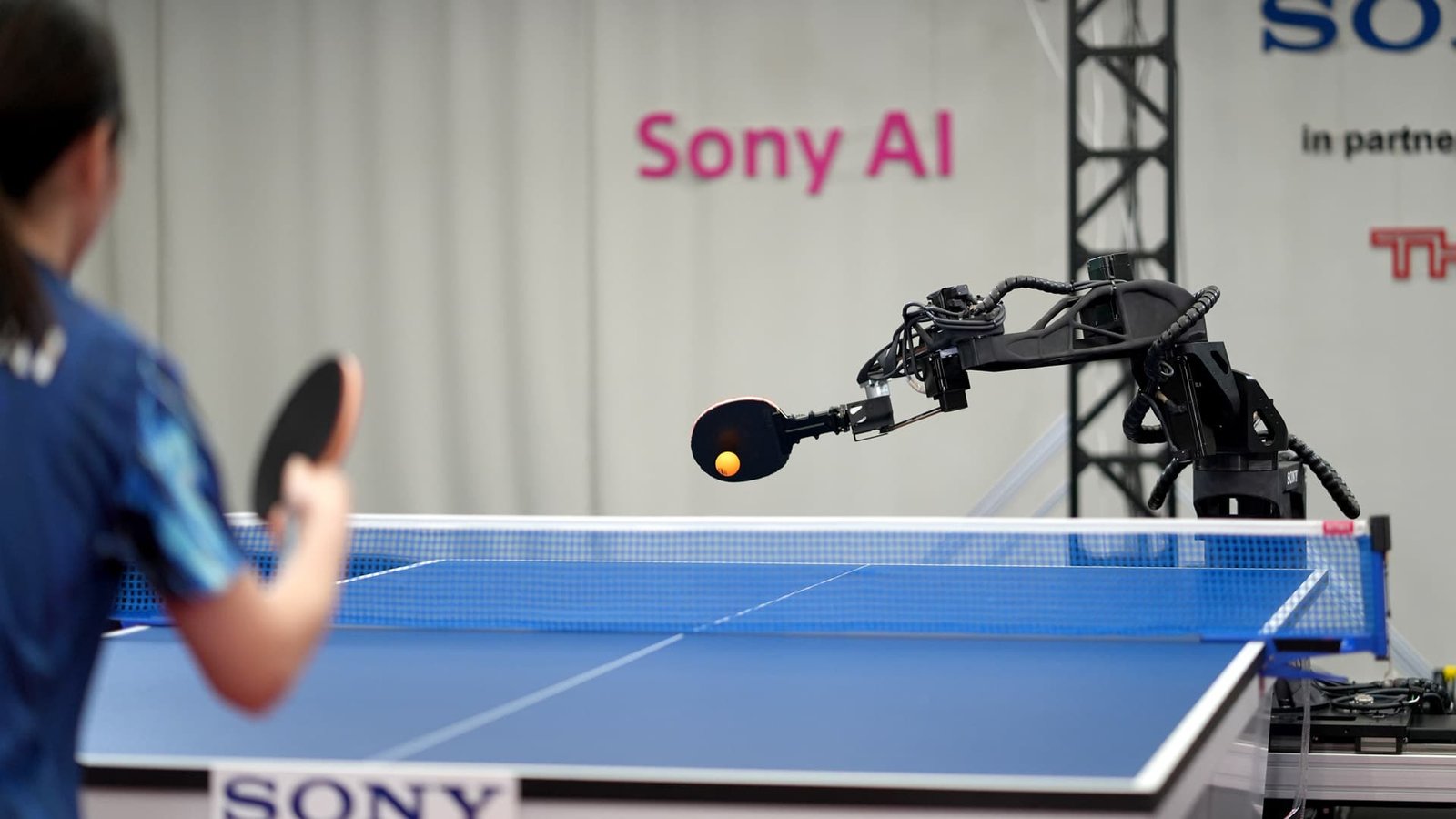

Ace is not a humanoid robot. It does not have legs or a face. It does not walk to the table and shake hands with its opponents. It is a stationary system purpose-built for one task — and it executes that task at a level no machine has achieved before.

The system consists of nine cameras, three event-based vision sensors, an eight-axis robotic arm, and an AI control system built on deep reinforcement learning. The ball’s position is calculated in three dimensions 200 times every second. Its rotation — the spin that makes table tennis so unpredictable and so difficult to master — is estimated using several hundred measurements per second. The reaction time from perception to arm movement runs at roughly one-tenth of the window available to a human player.

Unlike previous robotic table tennis systems, which operated under simplified rules, on smaller tables, or only against amateur opponents, Ace was tested under full official International Table Tennis Federation competition rules. Full-sized table. Regulation ball. No concessions to the machine. The researchers at Sony AI, based in Zurich and led by roboticist Peter Dürr, describe Ace in their Nature paper as the first real-world autonomous system competitive with elite human table tennis players.

How It Performed — Against Real Opponents

| Match Type | Opponents | Matches Played | Matches Won | Games Won |
|---|---|---|---|---|
| vs. Elite amateurs (20+ hrs/week training) | 5 players | 5 | 3 | 7 of 13 |
| vs. Professional T-League players | 2 players | 2 | 0 | 1 of 7 |
| vs. Professionals (after upgrades, March 2026) | 3 players | 3 | At least 1 against each | Not fully disclosed |

Against elite amateur players, people who have played competitively for over a decade and train approximately 20 hours a week, Ace won three out of five matches. The margin was close: Ace took 7 of the 13 games played. But the result came against opponents who would beat the overwhelming majority of casual players without difficulty.

Against Japan’s T-League professionals — among the best players in the world — Ace lost both matches but won one game against a professional named Minami Ando. For context: winning a single game against a professional in table tennis is a result that most competitive amateur players never achieve in a lifetime of play.

The March 2026 follow-up — in which the upgraded Ace won at least one match against each of three professionals — is the result that has the robotics community most energized. It suggests the system is not a proof-of-concept that plateaued. It is a system still improving.
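The win rates implied by the results table can be checked with a few lines of Python. This is a minimal sketch: the dictionary layout and the names `RESULTS`, `win_rate`, and `summarize` are illustrative assumptions, and only the counts themselves come from the table.

```python
# Figures copied from the results table. The March 2026 row is left out
# because its game counts were not fully disclosed.
RESULTS = {
    "elite_amateurs": {"matches": (3, 5), "games": (7, 13)},
    "t_league_pros": {"matches": (0, 2), "games": (1, 7)},
}


def win_rate(won, played):
    """Return the fraction of contests won."""
    return won / played


def summarize(results):
    """Compute match and game win rates for each opponent group."""
    return {
        group: {kind: win_rate(*counts) for kind, counts in rows.items()}
        for group, rows in results.items()
    }


if __name__ == "__main__":
    for group, rates in summarize(RESULTS).items():
        print(f"{group}: matches {rates['matches']:.1%}, games {rates['games']:.1%}")
```

By this arithmetic, Ace won about 54 percent of its games against the elite amateurs and about 14 percent of its games against the T-League professionals.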

Why Table Tennis Is So Hard for Machines

AI systems have beaten humans at chess, Go, poker, and StarCraft II for years. Those victories, while technically impressive, share a common characteristic: they happen in controlled digital environments where the rules are fixed and physics does not intervene unpredictably, even in games such as poker and StarCraft II where some information is hidden from the player.

Table tennis is categorically different. The ball travels at speeds exceeding 30 miles per hour. It carries spin that alters its trajectory in ways that depend on the precise angle, speed, and contact point of the last shot — variables that must be estimated in real time from a moving target. The playing surface introduces friction. Air resistance matters. The opponent’s body position, grip, and swing all carry information about what the next shot will do before it happens — and elite players read those signals unconsciously after years of training.
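To put those speeds in perspective, the sketch below estimates how far a ball moves between two consecutive position samples at the 200-per-second tracking rate reported for Ace. It is back-of-the-envelope arithmetic, not a model of the real system: it assumes a constant 30 mph ball speed along the length of a regulation 2.74 m table and ignores spin, drag, and bounce. The function names are illustrative.

```python
MPH_TO_MPS = 0.44704    # exact conversion factor, miles per hour to metres per second
TABLE_LENGTH_M = 2.74   # regulation table length in metres


def travel_per_sample_m(speed_mph, rate_hz):
    """Distance in metres the ball covers between two consecutive samples."""
    return speed_mph * MPH_TO_MPS / rate_hz


def samples_over_table(speed_mph, rate_hz):
    """Number of position samples taken while the ball crosses the table.

    Assumes constant speed along the table's length (a simplification).
    """
    flight_time_s = TABLE_LENGTH_M / (speed_mph * MPH_TO_MPS)
    return flight_time_s * rate_hz


if __name__ == "__main__":
    step_cm = travel_per_sample_m(30, 200) * 100
    print(f"Travel between samples: {step_cm:.1f} cm")
    print(f"Samples per table crossing: {samples_over_table(30, 200):.0f}")
```

Under these assumptions the ball moves about 6.7 cm between samples and crosses the table in roughly 0.2 seconds, which leaves on the order of 40 position readings per shot.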

Building a machine that can perceive all of that, process it, and respond with a physical arm in under a tenth of a second — and do it consistently, across multiple games, against trained athletes who are actively trying to beat it — is a fundamentally harder problem than defeating a human at chess. Ace is the first system to demonstrate it is solvable.

The Technique That Made the Difference

Ace did not win by hitting harder than its human opponents. Analysis of its matches showed that the system’s decisive advantage was spin detection. Table tennis points are often won or lost not on speed but on the ability to read and return heavily spun shots — topspin, backspin, sidespin — that arrive fast and break sharply. Ace successfully returned 75 percent of spinning balls across a wide range of spin types, a return rate that exceeds what most human players can achieve against elite opponents.

The system did not learn this from human demonstrations: Ace was not trained by watching expert players and copying their technique. It learned from scratch through reinforcement learning, a process in which the AI attempts actions, receives feedback based on whether those actions succeeded, and gradually builds a strategy from millions of iterations of trial and error. The resulting technique is distinctly non-human in its mechanics. Ace does not play the way a trained athlete plays. It plays the way a machine that has optimized purely for winning plays, and against most human opponents, those two approaches are now producing similar results.
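The trial-and-error loop described above can be illustrated with a toy example. This is not Ace's training method, which the article describes only as deep reinforcement learning. It is a much simpler tabular, epsilon-greedy learner, and everything in it is a hypothetical assumption made for illustration: the three paddle angles, the `BEST_ANGLE` mapping, and the success probabilities of 0.9 and 0.2.

```python
import random

SPINS = ["topspin", "backspin", "sidespin"]
PADDLE_ANGLES = ["closed", "open", "angled"]

# Hypothetical ground truth, hidden from the learner: one angle per spin
# returns the ball 90% of the time, the others only 20% of the time.
BEST_ANGLE = {"topspin": "closed", "backspin": "open", "sidespin": "angled"}


def train(episodes=6000, epsilon=0.1, seed=0):
    """Learn an estimated return rate for every (spin, angle) pair."""
    rng = random.Random(seed)
    value = {(s, a): 0.0 for s in SPINS for a in PADDLE_ANGLES}
    count = {key: 0 for key in value}
    for _ in range(episodes):
        spin = rng.choice(SPINS)
        if rng.random() < epsilon:
            # Explore: try a random angle.
            angle = rng.choice(PADDLE_ANGLES)
        else:
            # Exploit: use the angle with the best estimate so far.
            angle = max(PADDLE_ANGLES, key=lambda a: value[(spin, a)])
        p_success = 0.9 if angle == BEST_ANGLE[spin] else 0.2
        reward = 1.0 if rng.random() < p_success else 0.0
        # Feedback: move the running average toward the observed reward.
        count[(spin, angle)] += 1
        value[(spin, angle)] += (reward - value[(spin, angle)]) / count[(spin, angle)]
    return value


def greedy_policy(value):
    """Pick the highest-valued angle for each spin type."""
    return {s: max(PADDLE_ANGLES, key=lambda a: value[(s, a)]) for s in SPINS}


if __name__ == "__main__":
    print(greedy_policy(train()))
```

The learner is never told which angle suits which spin. It discovers the mapping only from whether each attempt succeeded, which is the core idea of the process the article describes, at a vastly smaller scale.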

What This Means Beyond Ping-Pong

The significance of Ace extends well beyond the sport it was built to play. Table tennis was chosen specifically because it combines several of the hardest challenges for physical AI: extreme speed, unpredictable spin, real-world physics, and an adversarial opponent who adapts in real time. Solving it demonstrates capabilities relevant to robotics applications with far broader stakes.

Surgical robotics requires the same combination of high-speed perception, fine motor control, and real-time adaptation to unpredictable physical conditions. Industrial assembly — fitting components that vary slightly from unit to unit, under time pressure, without human supervision — requires the same loop of perception, decision, and precise movement. Search and rescue robotics operating in unstructured environments requires the ability to make fast physical decisions based on incomplete information.

Ace did not solve any of those problems directly. But it demonstrated, under peer-reviewed and publicly observable conditions, that the underlying AI architecture — event-based vision combined with deep reinforcement learning — can operate at speeds and accuracy levels previously thought to require human-level biological hardware.

The next question is not whether AI can compete physically with humans. Ace settled that. The question is which domains it reaches next — and how fast.